In 2026, AI tools give clear answers quickly, but you may notice that the same few websites are cited again and again.

This happens because AI models do not trust content the way humans do. They don’t reward effort or rankings, and accuracy alone is not enough. They follow their own logic to decide which sources feel reliable enough to use when answering questions at scale.

In this post, you’ll learn how AI models evaluate content and decide which sources to trust, and why visibility in 2026 depends more on clarity, consistency, and structure than on traditional SEO signals.

AI Models Do Not “Browse” the Internet Like Humans

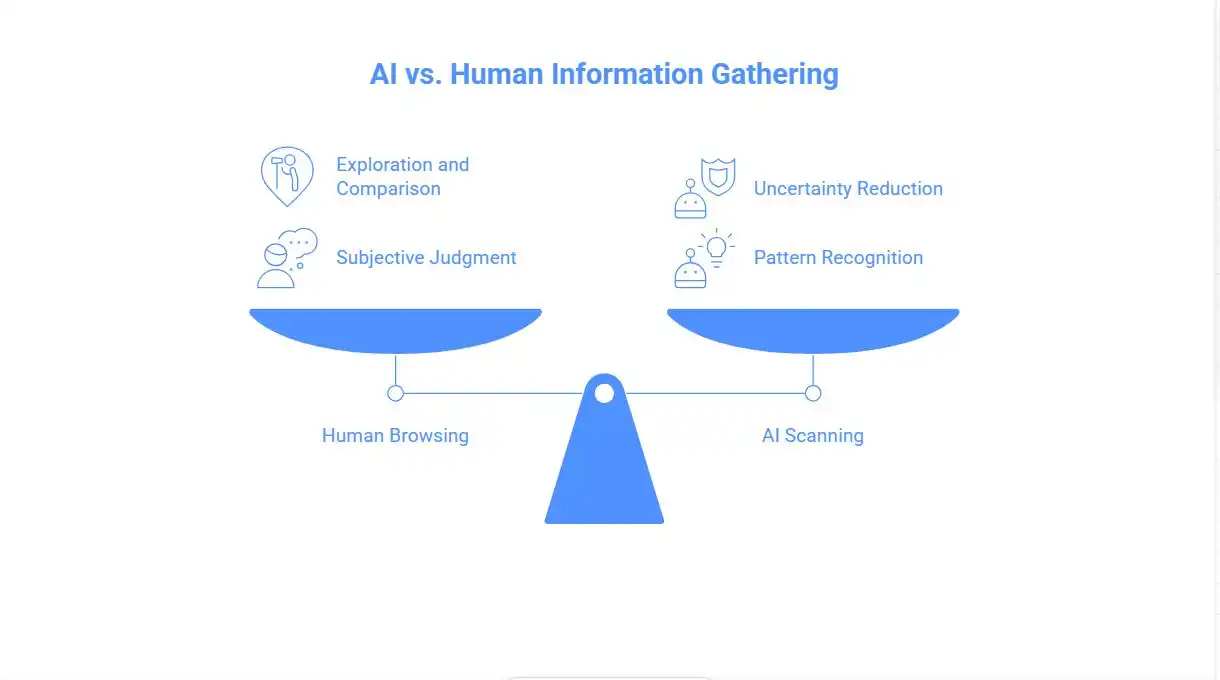

One of the biggest misunderstandings is thinking that AI reads the web the way people do. Humans scroll, compare opinions, and decide what feels right. AI does none of that.

AI models scan content to reduce uncertainty. Their goal is not exploration. Their goal is confidence. They want to give answers that feel stable and safe.

This changes everything.

When you understand how AI models think, you realise they are not looking for creativity first. They are looking for patterns that suggest reliability.

That is why some websites are repeatedly used, while others are quietly ignored.

Trust for AI Means Predictability, Not Popularity

For humans, trust can come from emotion, storytelling, or brand love. For AI, trust is closer to a statistical judgement about how a source behaves.

AI models trust sources that behave in predictable ways over time.

This includes:

- Explaining topics the same way again and again

- Staying within a clear subject area

- Avoiding sudden shifts in tone or opinion

A site that changes direction often becomes risky for AI. Even if the content is good, inconsistency makes it harder to rely on.

In 2026, trusted sources are not always the biggest. They are the most stable.

Why Clear Content Is Easier for AI to Trust

AI models prefer content they can compress without losing meaning. This is why clarity matters more than style.

When content is:

- Clearly structured

- Direct in explanation

- Focused on one idea at a time

AI can safely summarise it.

But when content jumps between ideas, mixes opinion with facts, or hides the point deep inside long paragraphs, AI hesitates. The risk of misrepresenting the source becomes higher.

This is a core rule of AI content evaluation:

If it’s hard to summarise, it’s hard to trust.
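One rough way to check your own pages against this rule is to look for paragraphs long enough to bury their main point. The sketch below is only a self-audit heuristic, not a description of how any AI model scores content. The function name `flag_long_paragraphs` and the 80-word threshold are assumptions chosen for illustration.

```python
def flag_long_paragraphs(text, max_words=80):
    """Return (index, word_count) for each paragraph longer than max_words.

    Paragraphs are assumed to be separated by blank lines.
    The 80-word default is an arbitrary illustrative threshold.
    """
    paragraphs = [p.strip() for p in text.split("\n\n") if p.strip()]
    flagged = []
    for index, paragraph in enumerate(paragraphs):
        word_count = len(paragraph.split())
        if word_count > max_words:
            flagged.append((index, word_count))
    return flagged


if __name__ == "__main__":
    sample = "A short, direct paragraph.\n\n" + "word " * 100
    for index, count in flag_long_paragraphs(sample):
        print(f"Paragraph {index} has {count} words; consider splitting it.")
```

A flagged paragraph is not automatically a problem. It is simply a place to check whether the key point appears early and stands on its own.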

Authority Is Detected, Not Claimed

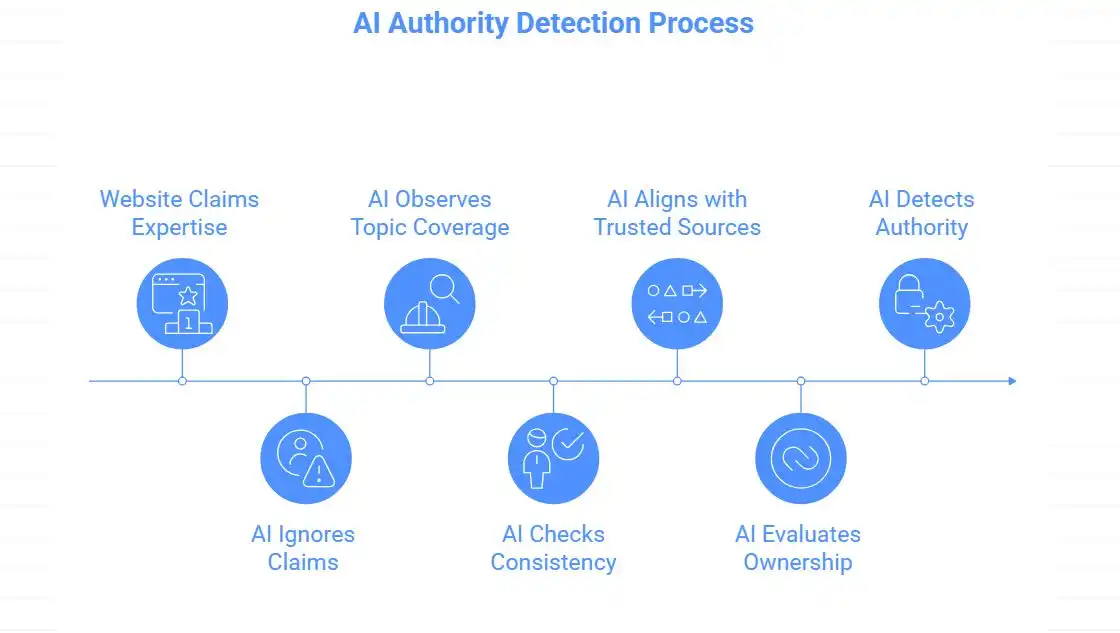

Many websites try to tell AI that they are experts. AI ignores these claims.

AI models look for indirect proof.

They observe:

- How deeply a topic is covered across multiple pages

- Whether explanations stay consistent over time

- Whether other trusted sources frame the topic in a similar way

- Whether the site shows clear ownership and responsibility

Authority is built slowly. AI notices patterns, not headlines.

This is why small, focused websites sometimes appear in AI answers while large, general sites do not.

Why Consistency Often Beats Original Ideas

From a human view, new ideas feel exciting. From an AI view, they feel risky.

AI models are trained to avoid mistakes. They prefer explanations that match the current shared understanding of a topic.

This does not mean AI hates originality. It means originality must be supported clearly.

If a new idea:

- Fits within existing knowledge

- Is explained carefully

- Is connected to known concepts

AI can use it.

But if an idea feels isolated or unclear, AI will avoid it, even if it is smart.

This is one reason why “unique” content sometimes fails to appear in AI summaries.

Entity Clarity Plays a Bigger Role Than Most Realise

AI does not judge content only at the page level.

It also evaluates the entity behind the content.

An entity can be:

- a brand

- a person

- a product

- a clearly defined concept

When AI cannot clearly understand who is responsible for the content, trust becomes harder to build.

This is why websites without a clear brand identity, visible authorship, or a consistent focus are difficult for AI to rely on. Even useful content becomes risky when its source feels unclear.

Anonymous usefulness is fragile. Recognisable expertise feels safer and more dependable.

In 2026, entity clarity works quietly as a trust signal, even when users never see or click the source.
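One widely used way to state who is behind a page is schema.org structured data, embedded in the page as JSON-LD inside a `<script type="application/ld+json">` tag. The sketch below builds such a description in Python. The helper name `build_article_jsonld` and every value passed to it are placeholders, and whether a particular AI system reads this markup is an assumption, not something this post can confirm.

```python
import json


def build_article_jsonld(headline, author_name, publisher_name, url):
    """Return a schema.org Article description as a JSON-LD string.

    All argument values are supplied by the caller; nothing here is
    specific to any real site.
    """
    data = {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "url": url,
        # Visible authorship: the person responsible for the content.
        "author": {"@type": "Person", "name": author_name},
        # Brand identity: the organisation that publishes it.
        "publisher": {"@type": "Organization", "name": publisher_name},
    }
    return json.dumps(data, indent=2)


if __name__ == "__main__":
    # Placeholder values for illustration only.
    print(build_article_jsonld(
        headline="How AI Models Decide Which Sources to Trust",
        author_name="Example Author",
        publisher_name="Example Brand",
        url="https://example.com/ai-source-trust",
    ))
```

Markup like this does not create authority on its own. It only makes the author and publisher that already exist easier for a machine to identify.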

Why Generic Content Is Easy to Ignore

A hard truth is that correct content alone is not enough. During AI content evaluation, accuracy is expected, not rewarded.

If your content says the same thing as hundreds of other pages, AI gains nothing by using it. AI models quietly ask one simple question:

“Does this source help me explain better?”

If the answer is no, the content is skipped. This is why many SEO-driven articles fail in AI environments, especially when businesses rely on outdated strategies rather than working with an experienced SEO company that understands how AI evaluates content.

AI does not reward repetition. It rewards clarity, usefulness, and the ability to improve understanding.

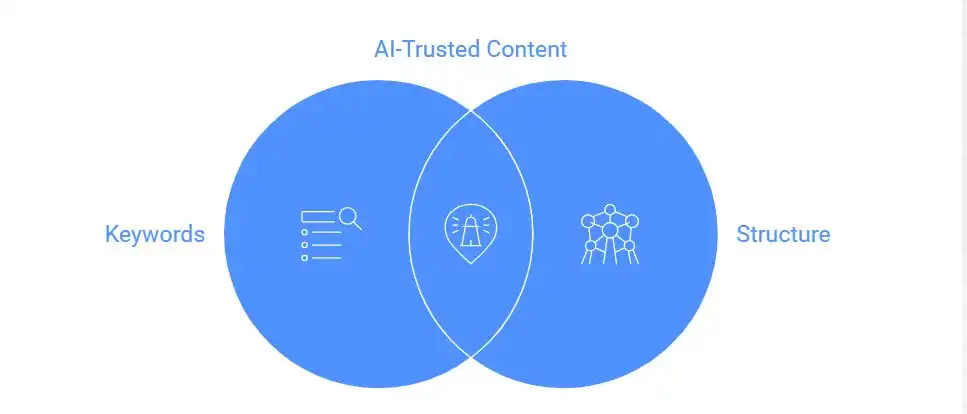

Structural Signals Matter More Than Keywords

Traditional SEO focused heavily on keywords. AI focuses on relationships.

AI looks at:

- How ideas connect

- How explanations build on each other

- Whether the content forms a clear knowledge path

Well-linked content clusters are easier for AI to trust than isolated posts.

This is why content hubs perform better than one-off blogs. AI prefers systems of knowledge over single answers.

In AI content evaluation, structure is a trust signal.
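To see whether your own site forms a connected cluster or a set of isolated posts, you can map its internal links and look for pages that nothing else links to. The sketch below assumes you already have a dictionary of pages and their internal links; the function name `find_orphan_pages` and the example paths are hypothetical. It is a site-audit aid, not a model of how AI systems read links.

```python
def find_orphan_pages(links):
    """Return pages that receive no internal links from other pages.

    links: dict mapping each page path to the list of internal
    page paths it links to. Self-links are ignored.
    """
    linked_to = set()
    for source, targets in links.items():
        for target in targets:
            if target != source:
                linked_to.add(target)
    return sorted(page for page in links if page not in linked_to)


if __name__ == "__main__":
    # Hypothetical site map: a hub, two cluster pages, one isolated post.
    site = {
        "/hub": ["/guide-a", "/guide-b"],
        "/guide-a": ["/hub"],
        "/guide-b": ["/hub", "/guide-a"],
        "/one-off-post": [],
    }
    print(find_orphan_pages(site))  # ['/one-off-post']
```

Pages that show up in this list are the "isolated posts" described above. Linking them into a relevant hub is usually the first fix to consider.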

Why Some Good Content Is Still Ignored

This is the most frustrating part for many creators.

Content can be accurate, helpful, and well written, and still be ignored by AI.

This usually happens when:

- The site lacks a clear topic focus

- The brand is not recognisable

- The explanation is correct, but basic

From the AI’s point of view, this is not unfair.

AI is not trying to be inclusive. It is trying to be confident.

If including a source increases uncertainty, even slightly, that source will be avoided.

AI Prefers Fewer Sources, Not More

Humans like multiple opinions. AI prefers fewer, stronger ones.

When generating answers, AI models aim to reduce noise. They choose a small set of sources that feel reliable enough to stand in for the whole web.

This is why being “one of many” is dangerous.

In 2026, visibility means being among the few AI feels safe relying on.

Influence Without Visibility Is a New Reality

Another uncomfortable shift is this: AI may learn from your content without showing your name.

Your ideas influence answers.

Your traffic does not increase.

Traditional SEO measured success through clicks. AI search breaks that link.

This creates a new challenge: how to earn recognition for influence that no longer shows up as clicks.

The solution is not chasing mentions. It is becoming so clearly authoritative that AI can confidently attach your name to the idea.

What This Means for Content Strategy in 2026

Understanding how AI models decide which sources to trust changes how content should be created.

The goal is no longer just ranking or engagement.

The real goal is to be:

- easy to understand

- easy to summarise

- easy to classify

- easy to trust

To get there, content needs:

- depth instead of volume

- clarity instead of clever writing

- consistency instead of constant change

- identity instead of anonymity

Content built this way works well for humans and machines at the same time.

The Silent Shift Businesses Often Miss

The biggest change is not technical. It is mental.

Search is no longer only about being found.

It is about being chosen.

AI makes that choice quietly, continuously, and at scale.

If your content does not give AI a clear reason to rely on it, it will slowly disappear from AI-driven discovery.

Final Thought

AI does not ignore sources out of bias or intention. It ignores them because they are difficult to trust during AI content evaluation. When information feels unclear, inconsistent, or risky, AI systems simply move on to safer options.

In 2026, visibility is earned by becoming reliable, predictable, and easy to understand at scale. Creating clear content for AI models means focusing less on optimisation tricks and more on structure, consistency, and identity that AI can confidently rely on.

The real question is no longer, “Why isn’t AI showing my content?”

It is, “Have I made my content easy for AI to trust at all?”